AI to the Desktop: The Coming Hybrid Architecture

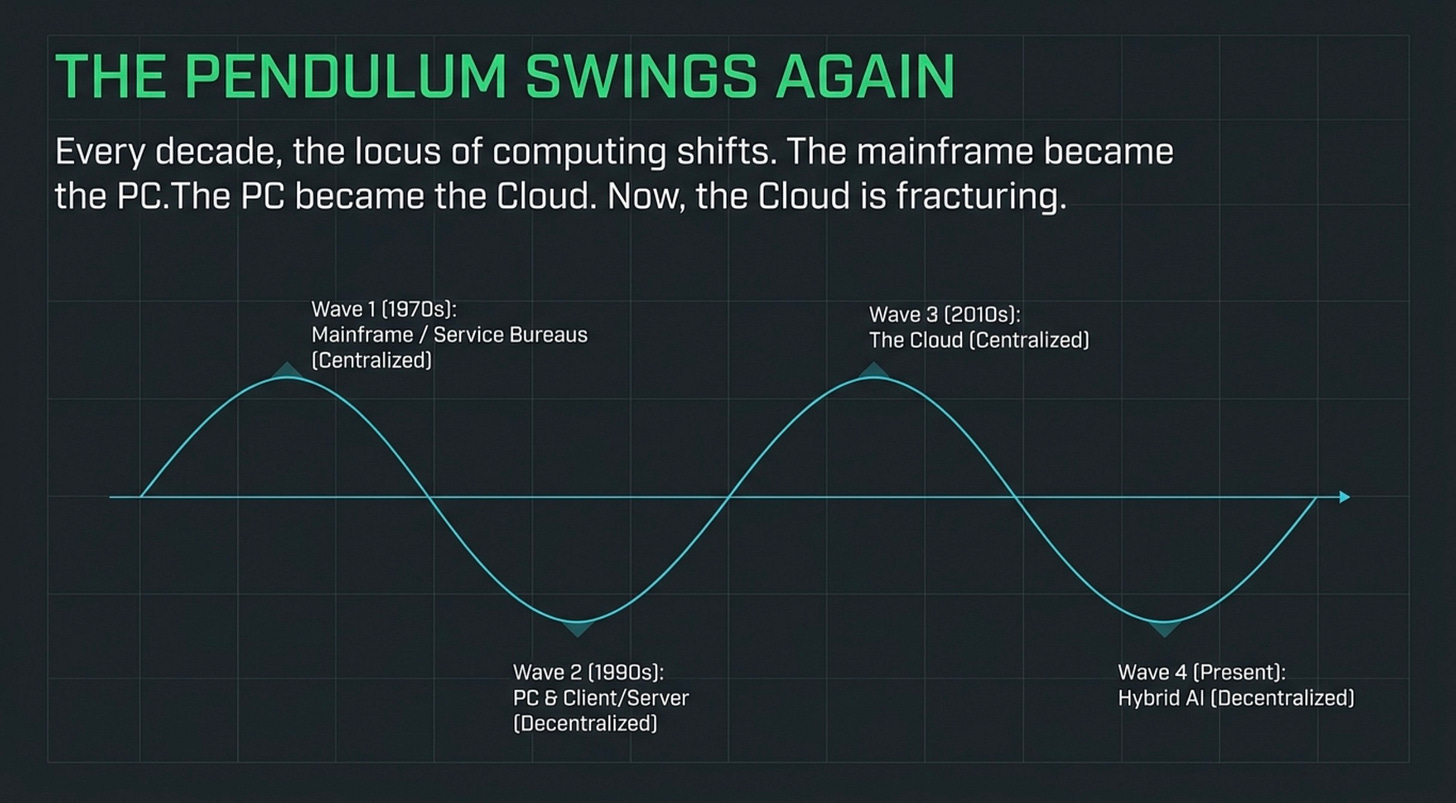

From mainframe to client/server to cloud to hybrid, the cycle continues.

Author’s Note about the use of AI in this blog

This is a direct note from the author. I am writing this with no assistance from AI. This blog is part of a series about the architectural decisions you need to make to manage AI in the future. In the 45 years I’ve been in technology, I have seen every hype cycle come and go. At the end of each cycle there’s always something left over that becomes part of the daily IT world until the next cycle comes.

The article was written with assistance from Claude AI. It acted as a research assistant and an editor. I wrote the introduction and it was edited by Claude. Claude drafted some of the information about how the architecture should be designed. I gave Claude further suggestions and we created the technical body. This is mostly Claude because I can’t write any better than Claude, so why bother? The key to this is that I provided the base hypothesis, had Claude research that hypothesis, made several iterations to improve the hypothesis, and then created the article. I wrote it myself where it required opinion and analysis and I had Claude do the technical details. I hope you find it useful.

Introduction

When I was 13 years old, back in 1968, the teacher brought a teletype into the classroom. It was some magic device connected to something called a mainframe somewhere far away. We could type questions into it and it would give us answers. I was amazed, but I really didn’t think anything of it. I wonder whether my past self would have believed me if I’d told him that one day I’d be the CEO of a company that specialized in computer communications.

For the next decade, the mainframe ruled. Then something changed. I was working for the City of Hialeah in the early 80s. My boss put a box on my desk and said, “This is a word processor. Figure out how to make it work.” Move forward ten years and everybody had a computer on their desk, and many of the mainframe applications had been pushed into something called the client/server model. The mainframe lived, but it had absolutely zero glamour.

Over the years, I left public service and entered the high-tech business. By the early 2000s, I was CEO of a company that specialized in data networking. The advent of the internet provided ample opportunities for companies like mine.

Then, somewhere around 2010, the cloud appeared. The cloud was a large compute system in a data center with multiple connections to the worldwide network. Suddenly everybody wanted to go to the cloud — because managing networks, the rapid pace of software development, and the variability of demand created a need for flexible systems that could grow and adapt.

Basically, the cloud was an evolved version of a mainframe, and you paid for it by usage. That billing model wasn’t much different from the mainframe service bureaus of the 70s. The cloud became so popular that I built one. We specialized in unified communications. The mainframe had become glamorous again. They put lipstick on it, called it the cloud, and started charging people for it.

When I sold my company in 2016 and exited in 2017, we were beginning to hear the first warnings about the advent of AI. AI became the ultimate expression of the cloud — gigantic data centers filled with impossibly large amounts of compute power, charged on a usage basis, interconnected to the entire global network.

But things are changing again. There’s now a movement for organizations to pull some of their compute needs off the cloud and back into their own infrastructure. That trend is now entering the AI space. This is the fourth time in my career I’ve watched this pattern play out, and I’ve learned to recognize the early signals.

The cycle continues — and if history is any guide, the companies that recognize the shift early will capture disproportionate value while the ones that don’t will spend the next decade catching up.

-----

What Actually Changed

The technical landscape shifted in 2025 and 2026 in ways most executives haven’t fully registered yet. Apple’s M5 Pro and M5 Max chips embedded Neural Accelerators directly inside every GPU core, allowing models with tens of billions of parameters to run on a laptop. Qualcomm shipped the Snapdragon X2 Elite with an 80 TOPS NPU. AMD’s Strix Halo platform paired a 60 TOPS NPU with up to 128GB of unified memory, and AMD published an engineering write-up in April 2026 about running a trillion-parameter model on a local cluster. Intel’s Panther Lake chips reached 172 total AI TOPS when combining CPU, GPU, and NPU. Microsoft mandated a 40 TOPS minimum for Copilot+ PC certification, forcing every Windows OEM to ship NPU-equipped hardware whether they wanted to or not.

At the same time, the open-weight model ecosystem matured. Meta’s Llama, Alibaba’s Qwen, Mistral, DeepSeek, and others released frontier-class models that anyone can download and run. The combination — capable hardware plus capable open weights — crossed the threshold where local inference is genuinely competitive with cloud APIs for most business workloads.

This isn’t a roadmap. It’s shipping product. The strategic question is no longer whether this happens but when your organization will be ready for it.

The Three Architectures Your Organization Will Actually Run

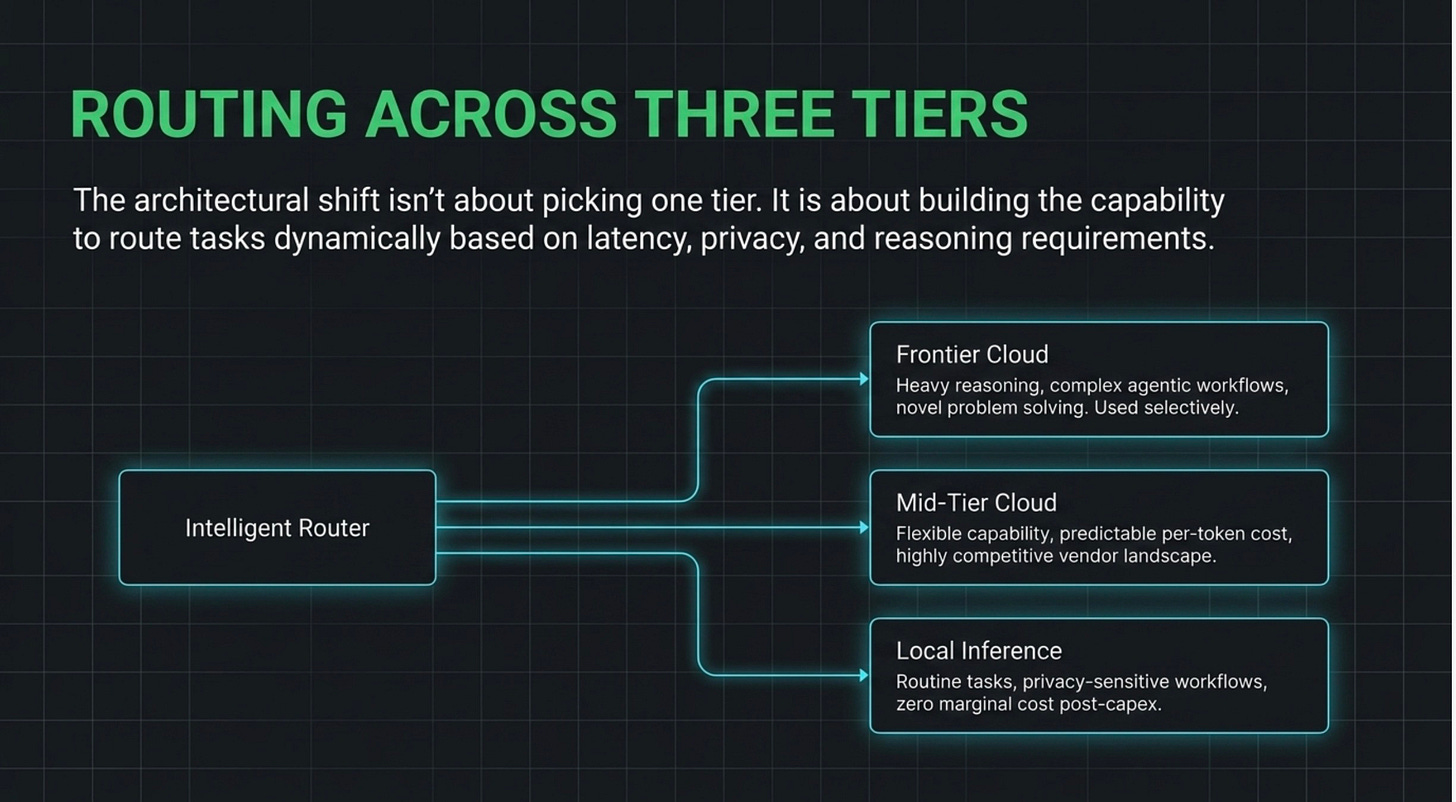

Most organizations today run 100% of their AI workload at the most expensive tier — frontier cloud APIs — because that’s the only thing they know how to access. The future isn’t choosing one tier. It’s intelligently routing across three.

**Local inference** handles routine tasks, high-frequency workflows, anything privacy-sensitive, anything where latency matters. It runs on company-owned hardware. Marginal cost approaches zero after the capital expense.

**Mid-tier cloud** handles tasks that need more capability than local can deliver but don’t require frontier reasoning. Predictable per-token costs, multiple competitive vendors, easy to swap.

**Frontier cloud** handles heavy reasoning, complex agentic workflows, and novel problem-solving. The most expensive tier, but where the cutting edge lives. Used selectively, not by default.

The architectural shift isn’t about which tier you pick. It’s about building the capability to route across all three based on what each task actually needs. That’s the move most organizations haven’t made yet, and it’s where the competitive advantage will be found.
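To make the routing idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a production design: the `Task` fields, the 1-to-3 complexity scale, and the rule that privacy or latency constraints always keep work local are all hypothetical choices made for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOCAL = "local inference"
    MID_CLOUD = "mid-tier cloud"
    FRONTIER_CLOUD = "frontier cloud"


@dataclass
class Task:
    # All fields are hypothetical; a real system would derive them from
    # data classification, service levels, and evaluation results.
    name: str
    privacy_sensitive: bool = False  # data should not leave company hardware
    latency_sensitive: bool = False  # a person or process is waiting on the answer
    complexity: int = 1              # 1 = routine, 2 = moderate, 3 = heavy reasoning


def route(task: Task) -> Tier:
    """Return the least expensive tier that satisfies the task's constraints."""
    # Assumption: privacy and latency constraints override capability needs.
    if task.privacy_sensitive or task.latency_sensitive:
        return Tier.LOCAL
    if task.complexity >= 3:
        return Tier.FRONTIER_CLOUD
    if task.complexity == 2:
        return Tier.MID_CLOUD
    return Tier.LOCAL


if __name__ == "__main__":
    examples = [
        Task("summarize internal memo"),
        Task("review patient record", privacy_sensitive=True, complexity=3),
        Task("compare two vendor contracts", complexity=2),
        Task("multi-step research agent", complexity=3),
    ]
    for task in examples:
        print(f"{task.name} -> {route(task).value}")
```

The design choice worth noticing is the order of the checks: constraints come first, capability second, so the frontier tier is reached only when nothing cheaper will do. A real router would also need fallbacks, for example what to do when a privacy-sensitive task is too hard for the local model.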

The Six Business Impacts That Actually Matter

**Cost structure inverts from variable to fixed.** Today’s AI economics punish heavy users — more usage means more cost, forever. Local inference moves the bulk of routine workloads to a capital cost model. Predictable. Budgetable. Doesn’t scale linearly with usage. AI ROI calculations need to be redone. Companies still pricing AI as variable cost are going to lose to the ones running it as infrastructure.

**Vendor leverage shifts back toward customers.** When your only AI option is a single cloud API, you accept their terms. When you can run a 70B-parameter model locally and reserve the frontier providers for the 10% of tasks that genuinely need them, your negotiating position transforms. Every existing enterprise AI contract should be renegotiated within 18 months under the assumption that real alternatives exist.

**Data sovereignty becomes default rather than exception.** For healthcare, legal, and financial services, this is the unlock. Local inference means sensitive data never leaves the building. Industries that have been AI-cautious due to compliance concerns are about to enter the market in force. First movers in those industries will have 12-18 months of competitive advantage before the laggards catch up.

**Continuity and resilience improve dramatically.** Cloud AI dependency means your business stops when your provider has an outage, deprecates a model you depend on, or changes terms with 30 days’ notice. Local inference is yours, persistently, regardless of what any vendor decides. This is a board-level risk question. Critical AI workflows running on cloud-only infrastructure are a single-point-of-failure dependency that needs to be classified and managed accordingly.

**The skills your organization needs are changing.** Cloud AI required prompt engineers and API integrators. Hybrid AI requires architectural thinking — people who understand how to route workloads, manage hybrid orchestration, build evaluation systems that work across model types. Hiring profiles, training investments, and consulting partnerships need to shift now, not after the transition is complete.

**Competitive moats based on AI access are about to evaporate.** Today, a small competitor without an enterprise AI contract is at a real disadvantage. Tomorrow, that same competitor with a Mac Studio and the right architecture is operating at near-parity for most tasks. If your competitive position depends on superior AI access, it’s about to erode. If it depends on superior AI integration, it’s about to be reinforced.
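The first impact, the move from variable to fixed cost, comes down to simple break-even arithmetic. The sketch below shows one way to frame it. Every number in it is a made-up input for illustration (the $20,000 hardware figure echoes the workstation example used later in this article), and the function names are hypothetical.

```python
from typing import Optional


def monthly_cloud_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Variable cost: grows in direct proportion to usage."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def breakeven_months(hardware_cost: float,
                     local_monthly_cost: float,
                     cloud_monthly_cost: float) -> Optional[float]:
    """Months until the hardware purchase is paid back by avoided cloud spend.

    Returns None when local running costs meet or exceed the cloud bill,
    because the purchase never pays for itself in that case.
    """
    monthly_savings = cloud_monthly_cost - local_monthly_cost
    if monthly_savings <= 0:
        return None
    return hardware_cost / monthly_savings


if __name__ == "__main__":
    # Hypothetical inputs for illustration only.
    cloud = monthly_cloud_cost(tokens_per_month=500_000_000,
                               price_per_million_tokens=10.0)
    months = breakeven_months(hardware_cost=20_000,
                              local_monthly_cost=500,
                              cloud_monthly_cost=cloud)
    print(f"Cloud bill: ${cloud:,.0f}/month")
    if months is None:
        print("No break-even at these inputs")
    else:
        print(f"Break-even after {months:.1f} months")
```

With these invented inputs the cloud bill is $5,000 a month and the hardware pays for itself in about 4.4 months. The model is deliberately crude: it ignores depreciation, staff time, and the share of work that still has to go to the cloud, so treat it as a starting point for the ROI conversation rather than an answer.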

The Organizational Readiness Question

This is where the technical writers stop being useful. They can tell you how to install Ollama or which model to download. They cannot tell you how to integrate this into a company that has finance, HR, IT, legal, and operations functions that all need to be aligned.

There are five organizational readiness dimensions that will determine whether your company captures the upside of this transition or watches it pass by.

**Governance.** Who owns AI architecture decisions? What’s the approval process for new models, new vendors, new local deployments? Most companies have not defined this. The result is that AI proliferates through the organization in unmanaged ways, with each department picking different vendors and different tools, and nobody able to answer basic questions about what’s running where.

**Procurement.** How do you buy hybrid AI capability when half of it is hardware capex and half is cloud opex? Your finance team’s existing categories don’t map cleanly. Approval workflows designed for SaaS subscriptions don’t accommodate $20,000 workstation purchases. Approval workflows designed for hardware don’t accommodate per-token API billing that varies wildly month to month.

**Risk management.** How do you classify AI workloads by criticality? Which can run on local inference only, which need cloud redundancy, which require multi-vendor diversity for resilience? These are not technical questions. They are risk decisions that require management judgment.

**Compliance.** How do you document AI usage for SOC 2, HIPAA, or GDPR audits when the model running on a developer’s laptop is doing real work on real customer data? Your existing compliance frameworks were written for cloud AI. They probably don’t address local deployment at all.

**Workforce.** Who in your organization needs to understand what about AI? The technical depth required varies enormously by role, and most companies are either over-investing in training the wrong people or under-investing across the board.

The technical transition is going to happen whether your organization is ready or not. The competitive question is whether your organization can absorb and operationalize it faster than your competitors. This is exactly the same dynamic that played out with PCs in the 1980s and the internet in the 1990s. Same playbook, faster timeline.
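The governance and risk questions above become easier to discuss once they are written down as an inventory plus a policy. The following Python sketch shows one possible shape for that. The criticality levels, the policy thresholds, and the vendor names are all hypothetical; the actual values are the management judgment calls this section describes.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Set, Tuple


class Criticality(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical policy: (minimum independent places to run, minimum cloud vendors).
POLICY: Dict[Criticality, Tuple[int, int]] = {
    Criticality.LOW: (1, 0),     # local only is acceptable
    Criticality.MEDIUM: (2, 1),  # needs a cloud fallback
    Criticality.HIGH: (2, 2),    # needs multi-vendor diversity
}


@dataclass
class Workload:
    name: str
    owner: str                   # the department accountable for it
    criticality: Criticality
    runs_locally: bool = False
    cloud_vendors: Set[str] = field(default_factory=set)


def policy_gaps(workload: Workload) -> List[str]:
    """List the ways a workload's deployment falls short of the policy."""
    min_options, min_vendors = POLICY[workload.criticality]
    vendors = len(workload.cloud_vendors)
    options = int(workload.runs_locally) + vendors
    gaps = []
    if options < min_options:
        gaps.append(f"needs {min_options} independent options, has {options}")
    if vendors < min_vendors:
        gaps.append(f"needs {min_vendors} cloud vendor(s), has {vendors}")
    return gaps


if __name__ == "__main__":
    inventory = [
        Workload("meeting notes", "hr", Criticality.LOW, runs_locally=True),
        Workload("claims triage", "operations", Criticality.HIGH,
                 cloud_vendors={"vendor-a"}),
    ]
    for w in inventory:
        gaps = policy_gaps(w)
        status = "ok" if not gaps else "; ".join(gaps)
        print(f"{w.name} ({w.owner}, {w.criticality.value}): {status}")
```

Even a list this simple answers the basic question of what is running where, and it flags the high-criticality workload that depends on a single vendor.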

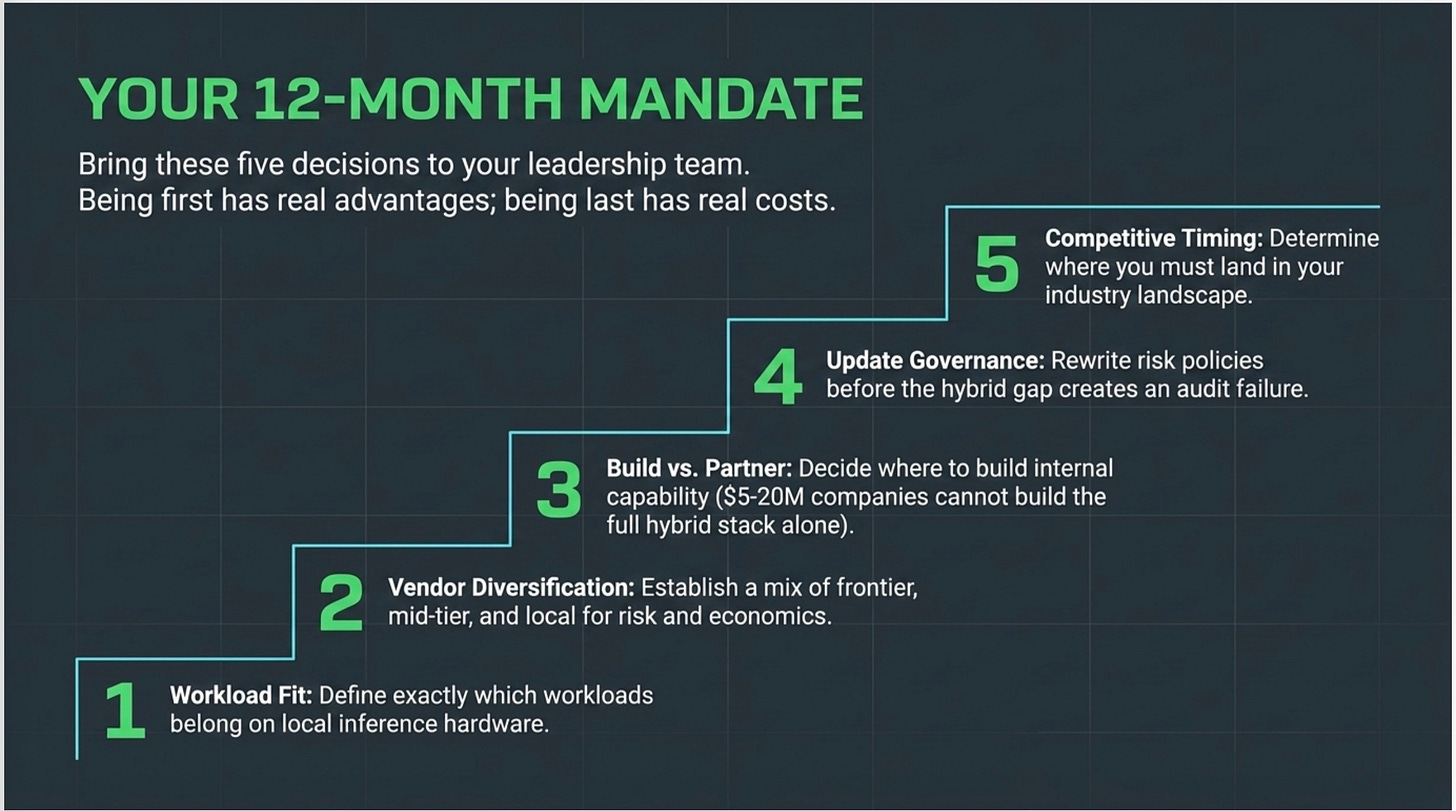

The Five Decisions You Need to Make in the Next 12 Months

Take this back to your leadership team. These are the questions that need answers before the end of next year.

**Where does local inference fit in our architecture?** Not “do we use it” — that question will answer itself. The strategic question is which workloads, on what hardware, with what governance.

**What is our vendor diversification strategy?** Cloud-only is no longer the only option. What mix of frontier cloud, mid-tier cloud, and local makes sense for your risk profile and economics?

**What capabilities do we build internally versus partner for?** The full hybrid stack is too complex for most $5-20M companies to build alone. Where do you invest in internal capability and where do you bring in expertise?

**How do we update our governance and risk frameworks?** Existing policies were written for cloud AI. They probably do not address local deployment, model routing, or evaluation requirements. They need to be updated before the gap creates a problem, not after.

**What is our competitive timing?** Being first in your industry has real advantages. Being last has real costs. Where in your competitive landscape do you need to be?

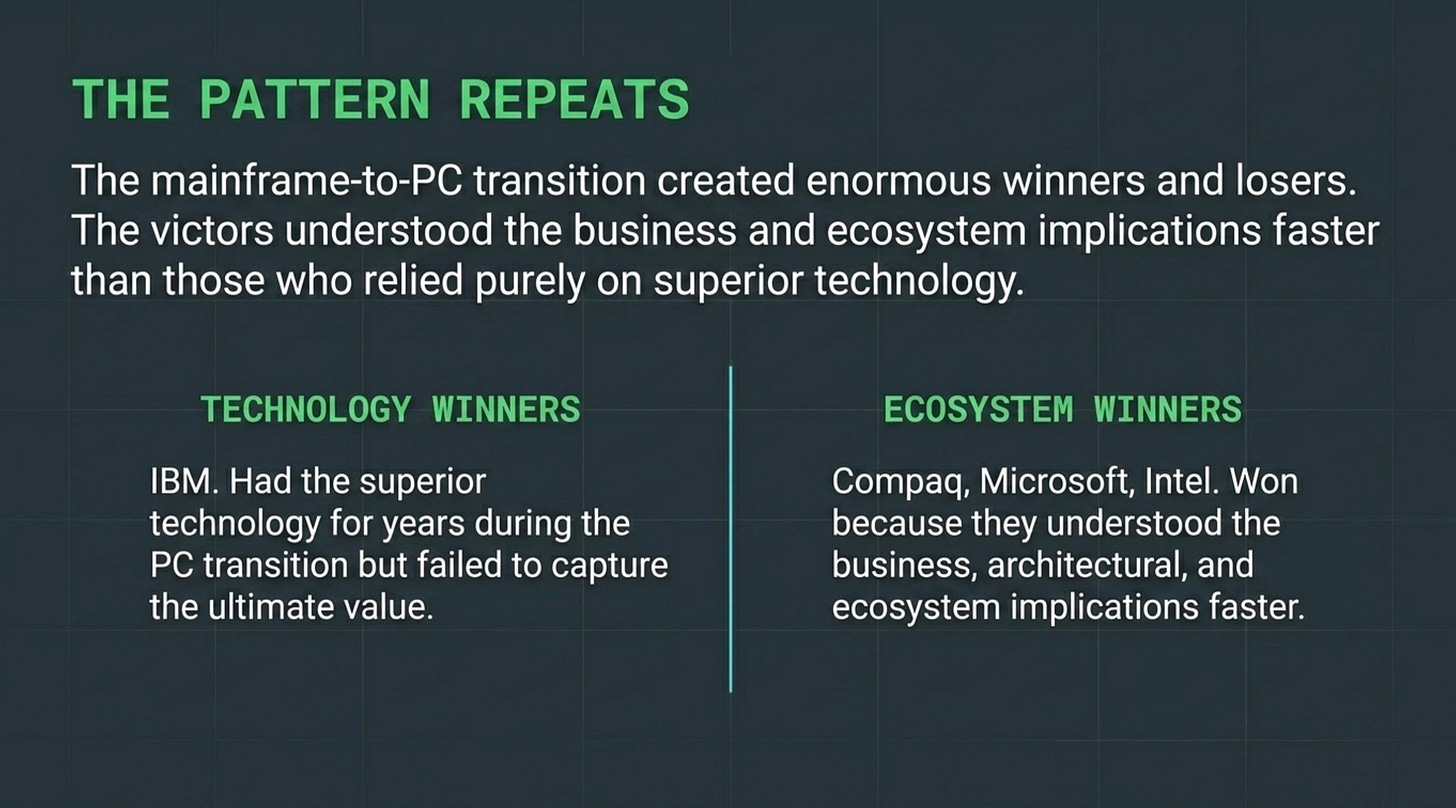

The Pattern, Restated

The mainframe-to-PC transition created enormous winners and enormous losers, and the determining factor was not who had the best technology. It was who managed the organizational transition most effectively. IBM had better technology for years. Compaq, Microsoft, and Intel won because they understood the business and ecosystem implications faster.

The same will be true here. The companies that win the hybrid AI transition will not be the ones with the most sophisticated models. They will be the ones whose management teams recognized the architectural shift early, built the organizational capability to operate across the hybrid, and made better strategic decisions about where intelligence should live in their business.

That is a management challenge, not a technical one. It is also exactly the question Tech Wolves AI Advisory exists to help you answer.